
2.5.1 Correlating Security and Event Information

Security Information and Event Management Systems (SIEM)

SIEMs have two major functions:

- Central, secure collection point for logs. Administrators configure all servers, network devices and applications to send their logs directly to the SIEM, which stores them securely.

- They apply correlation rules (and, in some products, AI techniques) to all of those logs to detect patterns of malicious activity.

The great thing about a SIEM is that it has access to all of the logs across the organisation. In hierarchical organisations, network engineers might have access to firewall logs, system engineers to operating system logs, and application engineers to application logs. This siloed approach means that attacks may go unnoticed if the signs of the attack are spread across multiple departments: each administrator may see a piece of the puzzle but can't put the whole picture together.

The SIEM has access to all of the pieces of the puzzle and performs an activity known as log correlation to recognise combinations of activity that may indicate a security incident.

For example:

- an IDS might notice the unique signature of an attack in inbound network traffic which triggers an event within the SIEM that pulls together other information

- a firewall may note an inbound connection to a web server from an unfriendly country

- the web server might report suspicious queries that include signs of an SQL injection attack

- the database server might report a large query from a web application that deviates from normal patterns

- a router might report a large flow of information from the database server to a system located on the internet

Taken together, these events reveal a pattern of suspicious activity that no single log would show on its own.
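The correlation idea above can be sketched in Python. This is a minimal, hypothetical rule, not how any particular SIEM implements correlation. It assumes each log has already been parsed into an `Event` with a timestamp, a source name and the external IP address it relates to. It raises an incident when at least three different sources report on the same IP within 30 minutes. `Event`, `WINDOW` and `MIN_SOURCES` are illustrative names, and the IP addresses come from the reserved documentation ranges.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Event:
    timestamp: datetime
    source: str       # device that produced the log, e.g. "ids" or "firewall"
    related_ip: str   # external address the event involves (assumed already parsed)
    description: str


# Hypothetical rule settings, chosen for illustration only
WINDOW = timedelta(minutes=30)
MIN_SOURCES = 3


def correlate(events):
    """Return {ip: sources} for IPs reported by MIN_SOURCES sources within WINDOW."""
    by_ip = {}
    for event in events:
        by_ip.setdefault(event.related_ip, []).append(event)

    incidents = {}
    for ip, ip_events in by_ip.items():
        ip_events.sort(key=lambda e: e.timestamp)
        # Slide a time window that starts at each event in turn
        for i, first in enumerate(ip_events):
            sources = {
                e.source
                for e in ip_events[i:]
                if e.timestamp - first.timestamp <= WINDOW
            }
            if len(sources) >= MIN_SOURCES:
                incidents.setdefault(ip, set()).update(sources)
    return incidents


t0 = datetime(2024, 1, 1, 2, 0)
events = [
    Event(t0, "ids", "203.0.113.7", "SQL injection signature in inbound traffic"),
    Event(t0 + timedelta(minutes=1), "firewall", "203.0.113.7", "inbound connection to web server"),
    Event(t0 + timedelta(minutes=2), "webserver", "203.0.113.7", "suspicious query string"),
    Event(t0 + timedelta(minutes=5), "firewall", "198.51.100.9", "blocked port scan"),
]
print(correlate(events))
```

Only 203.0.113.7 is flagged, because three separate sources saw it within the window. The single firewall entry for 198.51.100.9 stays below the threshold.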

Tuning and Configuring SIEMs

There are a number of tasks you need to do to get your SIEM working properly.

- Point the relevant logs to the SIEM's log repository. This centralised repository is typically configured as WORM (Write Once, Read Many) storage, meaning each log entry is permanently recorded and can't be altered or deleted. This prevents a malicious user from covering their tracks.

- Synchronise system clocks. When you are trying to reconstruct events, you need to put them in the correct sequence, which is difficult if the log timestamps are not in sync. The easiest solution is to use the Network Time Protocol (NTP) to synchronise the clocks of all systems in the organisation.

- Tune SIEM performance. Out of the box, a SIEM can generate so many alerts that administrators start to ignore them. Once it is up and running, you need to tweak and tune it: modify rules that produce too many false positives, and disable trivial or irrelevant alerts so they don't waste administrators' time.
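The tuning step in the last bullet can be sketched with a hypothetical failed-login rule. Instead of alerting on every failure, it alerts only when one user fails `FAILED_LOGIN_THRESHOLD` times within `THRESHOLD_WINDOW`. Any rule listed in `DISABLED_RULES` is suppressed entirely. All names and values are illustrative; real SIEMs express this in their own rule languages.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta

# Hypothetical tuning settings, for illustration only
FAILED_LOGIN_THRESHOLD = 5                # alert only after 5 failures...
THRESHOLD_WINDOW = timedelta(minutes=10)  # ...within 10 minutes
DISABLED_RULES = {"internal-ping-sweep"}  # trivial rule switched off


class FailedLoginRule:
    rule_id = "failed-login-burst"

    def __init__(self):
        # user -> timestamps of that user's recent failed logins
        self.recent = defaultdict(deque)

    def process(self, user, ts):
        """Record a failed login (timestamps assumed in order); return True to alert."""
        failures = self.recent[user]
        failures.append(ts)
        # Forget failures that have fallen outside the window
        while ts - failures[0] > THRESHOLD_WINDOW:
            failures.popleft()
        return len(failures) >= FAILED_LOGIN_THRESHOLD


def should_alert(rule_id, fired):
    """Suppress alerts from rules an administrator has disabled."""
    return fired and rule_id not in DISABLED_RULES


rule = FailedLoginRule()
start = datetime(2024, 1, 1, 9, 0)
results = [rule.process("alice", start + timedelta(minutes=m)) for m in range(6)]
print(results)  # [False, False, False, False, True, True]
```

Raising the threshold trades a little detection speed for far fewer noisy alerts. The right values depend on the environment.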

2.5.2 Continuous Security Monitoring

This takes monitoring to the next level. Instead of reviewing past logs, it monitors the logs in real time and can take action in response to suspicious events.

3 Characteristics of a continuous monitoring approach

- Maps to the organisation's risk tolerance. You must make sure the monitoring activities are appropriate for your environment.

- Adapts to ongoing needs. Security is an ever-changing field, and monitoring processes must adapt to those needs.

- Actively involves management. Management must play an active role in continuous monitoring activities, providing leadership and resources.

6 Steps of the continuous monitoring process

- Define a continuous monitoring strategy

- Establish a monitoring program by outlining the metrics to use and the frequency at which we will monitor and assess

- Implement the program by collecting metrics, performing assessments and building reports. These tasks should be automated when possible

- Analyse/report findings from the collected data

- Respond to those findings by mitigating, avoiding, transferring or accepting risk

- Review and update the monitoring program, adjusting the strategy as needed

Monitoring Techniques

Anomaly Analysis: looks for data points that stand out from the rest of the data as clear outliers. EG: a large spike in bandwidth utilisation over a period of time

Trend Analysis: looks for changes over time. EG: increase/decrease in the number of accounts that have been compromised. This could warrant an investigation

Behavioural Analysis: looks at the activity of users and identifies suspicious actions. EG: if users normally log in between 8am and 5pm, it would report a user logging in at 3am

Availability Analysis: monitors system status and detects periods of downtime
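Each technique above can be sketched as a small Python function. These are simplified illustrations under stated assumptions, not production detectors:

- Anomaly analysis uses a z-score with an arbitrary threshold of 2.5.
- Trend analysis fits a least-squares line.
- Behavioural analysis assumes a fixed 8am to 5pm baseline.
- Availability analysis assumes time-ordered up/down status checks.

The sample data is made up.

```python
from datetime import datetime
from statistics import mean, stdev


def anomalies(values, z_threshold=2.5):
    """Anomaly analysis: indices of values far from the mean (z-score above threshold)."""
    mu, sigma = mean(values), stdev(values)
    if sigma == 0:
        return []
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > z_threshold]


def trend_slope(values):
    """Trend analysis: least-squares slope of values measured at equal intervals."""
    xs = range(len(values))
    x_bar, y_bar = mean(xs), mean(values)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, values))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den


def unusual_logins(logins, start_hour=8, end_hour=17):
    """Behavioural analysis: logins outside the normal working hours."""
    return [(user, ts) for user, ts in logins if not (start_hour <= ts.hour < end_hour)]


def downtime_periods(checks):
    """Availability analysis: (start, end) of outages from time-ordered (ts, is_up) checks."""
    periods, down_since = [], None
    for ts, is_up in checks:
        if not is_up and down_since is None:
            down_since = ts
        elif is_up and down_since is not None:
            periods.append((down_since, ts))
            down_since = None
    if down_since is not None:
        periods.append((down_since, None))  # still down at the last check
    return periods


bandwidth_mbps = [100, 102, 98, 101, 99, 100, 103, 97, 100, 450]
print(anomalies(bandwidth_mbps))  # [9]
print(trend_slope([2, 3, 5, 8]))  # 2.0 (compromised accounts per month rising)
logins = [("alice", datetime(2024, 1, 2, 9, 15)), ("alice", datetime(2024, 1, 3, 3, 0))]
print(unusual_logins(logins))     # only the 3am login
checks = [
    (datetime(2024, 1, 1, 0, 0), True),
    (datetime(2024, 1, 1, 0, 5), False),
    (datetime(2024, 1, 1, 0, 10), False),
    (datetime(2024, 1, 1, 0, 15), True),
]
print(downtime_periods(checks))   # one outage, from 00:05 to 00:15
```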

2.5.3 Data Loss Prevention (DLP)

Unwanted disclosure of sensitive information from an organisation (employee personal information, client health information etc…) could expose the organisation to fines, sanctions and reputational damage.

Data Loss Prevention (DLP) solutions provide technology that helps an organisation enforce its information handling policies and procedures in order to prevent data loss and theft.

DLP solutions search systems for stores of sensitive information that might be unsecured and they also monitor network traffic for potential attempts to remove sensitive information from the organisation. DLP solutions can act quickly to block transmissions before damage is done and alert administrators to attempted security breaches.

Types of DLP Systems

- Host Based DLP: uses a software agent installed on each system that searches it for sensitive information (social security numbers, credit card numbers, driver's licence numbers etc…). Once it is detected, security administrators can either remove this information or secure it with encryption. This method can also monitor system configurations and user actions. It can block undesirable actions, such as using USB removable drives, to prevent users from carrying information out of the organisation's secure environment.

- Network Based DLP: focuses on network connections, monitoring outbound traffic for any transmission of unencrypted sensitive information. It can then block the transmission or, in some cases (often with email), encrypt the content.

Example of DLP in use

Spirion is a DLP product that the tutor demonstrates in a video:

- It uses “Pattern Matching” to scan the hard drive for driver's licence numbers, social security numbers and credit card numbers

- It finds a “Rosters.csv” file that contains driver's licence numbers

- You then have the option to “Shred” the file (permanently delete it), redact the sensitive information in the file, encrypt the file, or ignore the warning
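The pattern matching step can be sketched in Python. This is not Spirion's implementation. It is a hypothetical scanner that looks for US social security numbers in the `NNN-NN-NNNN` format and for 13 to 16 digit card numbers. It keeps only card numbers that pass the Luhn checksum, which cuts false positives. Driver's licence formats vary by jurisdiction, so they are left out. The default `*.csv` glob in `scan_directory` mirrors the Rosters.csv example and is an assumption.

```python
import re
from pathlib import Path

# Illustrative patterns: US SSN (NNN-NN-NNNN) and 13-16 digit card numbers
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def luhn_valid(number):
    """Luhn checksum used by payment card numbers."""
    digits = [int(ch) for ch in number if ch.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def scan_text(text):
    """Return the sensitive values found in a block of text."""
    cards = [m.group() for m in CARD_RE.finditer(text) if luhn_valid(m.group())]
    return {"ssn": SSN_RE.findall(text), "card": cards}


def scan_directory(root, pattern="*.csv"):
    """Scan matching files under root; report only files containing sensitive data."""
    results = {}
    for path in Path(root).rglob(pattern):
        found = scan_text(path.read_text(errors="ignore"))
        if found["ssn"] or found["card"]:
            results[str(path)] = found
    return results


sample = "name,ssn,card\nBob,123-45-6789,4111 1111 1111 1111\nAmy,n/a,4111111111111112"
print(scan_text(sample))
# {'ssn': ['123-45-6789'], 'card': ['4111 1111 1111 1111']}
```

The second card number matches the digit pattern but fails the Luhn check, so it is not reported. A real DLP tool would then offer actions such as shredding, redacting or encrypting each file it flags.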

Cloud Based DLP

This option offloads the burden of managing a DLP to a third party cloud hosted solution.

2.5.4 Network Access Control (NAC)

Network administrators need to restrict network access to authorised users only and ensure that users have access to only the resources they need.

What is NAC?

NAC technology intercepts network traffic from devices that connect to a wired or wireless network. It verifies that the system and user are authorised to connect before allowing them to communicate with other systems.

NAC commonly uses the IEEE 802.1X standard for port-based authentication.

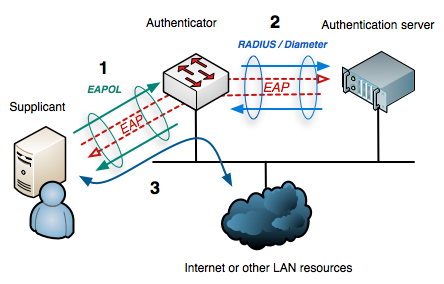

802.1X Process

- The supplicant (client) connects to the authenticator (a switch or wireless controller)

- The supplicant sends its credentials to the authenticator

- The authenticator relays these to the authentication server (typically a RADIUS server) for verification

- If the credentials are valid, the authentication server sends a RADIUS Access-Accept message and the device is allowed to access the network. If they are not, it sends a RADIUS Access-Reject message

Role Based Access

Once the identity of the user is known, the authenticator can decide where to place the user on the network based on policy (EG: put them on the staff or student VLAN).
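The 802.1X exchange and role-based placement can be simulated in a few lines of Python to show who talks to whom. This is a toy model, not real 802.1X. In a real deployment, supplicants and authenticators exchange EAP messages, and the authenticator talks RADIUS over the network. Here, plain method calls and a password comparison stand in for all of that. The `USERS` and `ROLE_VLANS` tables, the port names and the VLAN numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical user database and role-to-VLAN policy (illustration only;
# a real server would never store plaintext passwords)
USERS = {"alice": ("s3cret", "staff"), "bob": ("hunter2", "student")}
ROLE_VLANS = {"staff": 10, "student": 20}


@dataclass
class RadiusReply:
    code: str                   # "Access-Accept" or "Access-Reject"
    vlan: Optional[int] = None  # VLAN assigned by policy on acceptance


class AuthenticationServer:
    """Stands in for the RADIUS server."""

    def verify(self, username, password):
        record = USERS.get(username)
        if record is None or record[0] != password:
            return RadiusReply("Access-Reject")
        _, role = record
        return RadiusReply("Access-Accept", ROLE_VLANS[role])


class Authenticator:
    """Stands in for the switch or wireless controller."""

    def __init__(self, server):
        self.server = server
        self.port_vlan = {}  # port -> VLAN for ports with an authorised device

    def handle(self, port, username, password):
        # Relay the supplicant's credentials to the authentication server
        reply = self.server.verify(username, password)
        if reply.code == "Access-Accept":
            self.port_vlan[port] = reply.vlan  # open the port on the user's VLAN
        else:
            self.port_vlan.pop(port, None)     # keep the port closed
        return reply


switch = Authenticator(AuthenticationServer())
print(switch.handle("port5", "alice", "s3cret"))  # RadiusReply(code='Access-Accept', vlan=10)
print(switch.handle("port6", "bob", "wrong"))     # RadiusReply(code='Access-Reject', vlan=None)
print(switch.port_vlan)                           # {'port5': 10}
```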

Posture Checking (also known as Health Checking)

Posture checking ensures that client devices connecting to the network comply with the organisation's security policy. It checks things like:

- Verify A/V software is installed

- Validate the A/V software has the current signatures

- Ensure the device has a properly configured firewall

- Verify the device has recent patches
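The checks listed above can be expressed as a simple policy function. This is a minimal sketch, assuming an agent (or an agentless scan) has already produced a `DeviceReport` for the endpoint. The report fields, the two-day signature limit and the 30-day patch limit are hypothetical. The firewall check only tests whether the firewall is enabled, a simplification of "properly configured".

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical policy limits, for illustration only
MAX_SIGNATURE_AGE = timedelta(days=2)
MAX_PATCH_AGE = timedelta(days=30)


@dataclass
class DeviceReport:
    """What an agent or agentless scan is assumed to report about an endpoint."""
    av_installed: bool
    av_signature_date: Optional[date]
    firewall_enabled: bool
    last_patch_date: date


def posture_check(report, today):
    """Return a list of policy failures; an empty list means the device is compliant."""
    failures = []
    if not report.av_installed:
        failures.append("antivirus not installed")
    elif (report.av_signature_date is None
          or today - report.av_signature_date > MAX_SIGNATURE_AGE):
        failures.append("antivirus signatures out of date")
    if not report.firewall_enabled:
        failures.append("firewall not enabled")
    if today - report.last_patch_date > MAX_PATCH_AGE:
        failures.append("missing recent patches")
    return failures


today = date(2024, 6, 1)
laptop = DeviceReport(True, date(2024, 5, 31), True, date(2024, 5, 20))
stale = DeviceReport(True, date(2024, 5, 1), False, date(2024, 3, 1))
print(posture_check(laptop, today))  # []
print(posture_check(stale, today))
# ['antivirus signatures out of date', 'firewall not enabled', 'missing recent patches']
```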

Posture Checking comes in 3 different forms:

- Persistent agents: an agent is installed on each endpoint and communicates with the NAC controller

- Dissolvable agents: when the client tries to connect to the network they are directed to a portal that has them download a temporary agent that runs on their computer, performs posture checking, and is then removed on the completion of the NAC process

- Agentless approach: the NAC monitors network traffic and inspects configurations to gather enough information to make a decision about network access

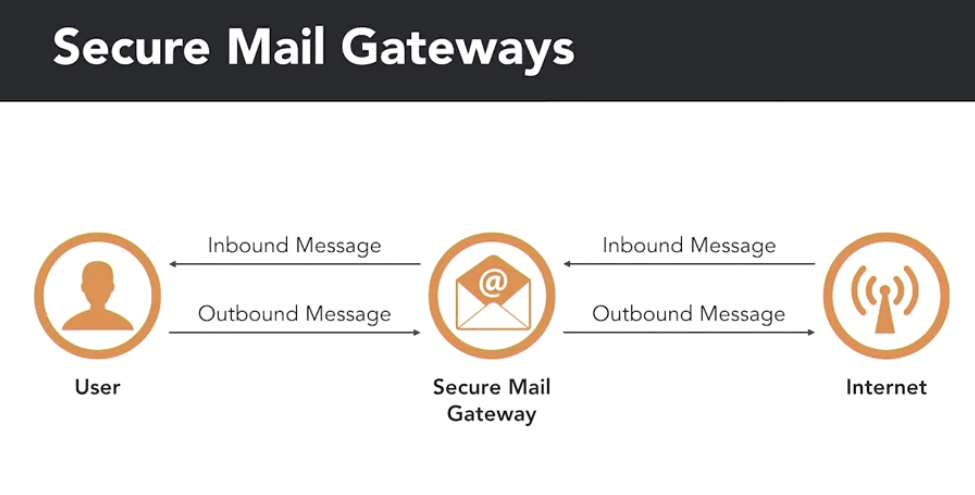

2.5.5 Secure Mail Gateways

Secure Mail Gateways sit between email users and the internet. They serve as the SMTP relay server for external mail coming into the organisation: mail hits the Secure Mail Gateway first and is then relayed to the mail server. Outbound mail from internal users passes through the gateway in the same way.

This position on the network allows the gateway to intercept and scan messages before passing them on. When a gateway scans a message, it takes one of four actions:

- If it meets the security policy it sends the message to its destination

- If the message is definitely malicious it blocks it or puts it in the quarantine zone

- If a message is suspicious but doesn’t meet the threshold for blocking or quarantining, it tags the message with a warning to the recipient that it may be suspicious

- If the gateway receives an outbound message that appears to contain sensitive data, its DLP component may encrypt the message

How does the gateway make decisions?

- Text analysis: blocks spam messages, phishing attacks and unwanted content

- Signature detection: scans attachments for known malicious files

- URL filtering: scans URLs in emails for links to known malware sites or other nasty destinations
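The decision logic above can be sketched as a single Python function. It is a simplified, hypothetical pipeline rather than any vendor's engine:

- Signature detection compares SHA-256 hashes of attachments against a known-malware set.
- URL filtering checks link hosts against a blocklist.
- Text analysis counts known spam phrases.
- Outbound messages containing something shaped like a US SSN are marked for encryption.

The hash set, domain list, phrases and score thresholds are made-up values. `malware.example` uses a reserved example domain.

```python
import hashlib
import re

# Hypothetical policy data, for illustration only
KNOWN_MALWARE_SHA256 = {hashlib.sha256(b"fake-malware-sample").hexdigest()}
BLOCKED_DOMAINS = {"malware.example"}
SPAM_PHRASES = ["verify your account", "urgent wire transfer", "you have won"]
TAG_SCORE, BLOCK_SCORE = 1, 3
URL_RE = re.compile(r"https?://([^/\s:]+)", re.IGNORECASE)  # captures the host part
SENSITIVE_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")  # e.g. a US SSN


def inspect(body, attachments=(), outbound=False):
    """Return "quarantine", "encrypt", "tag" or "deliver" for one message."""
    # Signature detection: attachment hashes matched against known malware
    if any(hashlib.sha256(a).hexdigest() in KNOWN_MALWARE_SHA256 for a in attachments):
        return "quarantine"
    # URL filtering: links pointing at blocklisted domains
    if any(host.lower() in BLOCKED_DOMAINS for host in URL_RE.findall(body)):
        return "quarantine"
    # Text analysis: a crude score from known spam/phishing phrases
    score = sum(phrase in body.lower() for phrase in SPAM_PHRASES)
    if score >= BLOCK_SCORE:
        return "quarantine"
    # Outbound DLP: encrypt messages that appear to carry sensitive data
    if outbound and SENSITIVE_RE.search(body):
        return "encrypt"
    if score >= TAG_SCORE:
        return "tag"  # suspicious but below the blocking threshold
    return "deliver"


print(inspect("Agenda for Monday's meeting"))                 # deliver
print(inspect("Please verify your account today"))            # tag
print(inspect("Click http://malware.example/login"))          # quarantine
print(inspect("Payroll: SSN 123-45-6789", outbound=True))     # encrypt
print(inspect("Invoice attached", [b"fake-malware-sample"]))  # quarantine
```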

Secure Email Gateways can be cloud-based or on-premises.

2.5.6 Data Sanitisation Tools

Deleting files from a disk does not completely remove the data they contain: it leaves remnants that may be recoverable with special tools. Data sanitisation corrects this problem by completely removing data from devices.

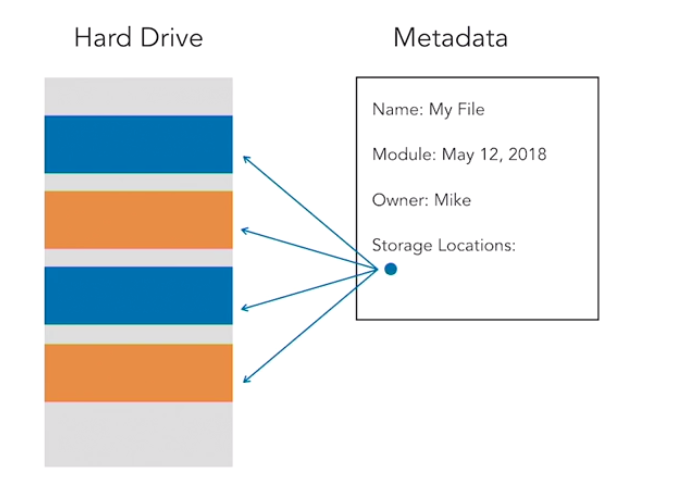

How Data is Stored on a Disk

When you store a file on a disk, the operating system may store pieces of it in different physical locations on the drive, depending on what space is available at the time. The operating system needs a way to pull these pieces back together and present the file to you in its original form. It does this using file system information known as metadata, which includes details such as:

- Name of file

- Creation date

- Access permissions

- Storage locations of the file

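On Linux file systems (such as ext4), this metadata is stored in structures called inodes, with file names kept in the directory entries that point to them. On Windows (NTFS), it is stored in the Master File Table (MFT).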

When you delete a file, the operating system simply removes the table entry for that file (or marks it as unused). The file then no longer appears in directory listings, and its storage space becomes available to other files. The data itself stays on the disk until it is overwritten, so if you threw that disk away, someone could read the raw data and reassemble the contents of the file.

Disk Sanitisation

This goes a step further than simply removing a file's metadata. Sanitisation programs overwrite every location on the disk where data was stored with ones, zeros or random data. This obliterates the stored information so it can't be reconstructed.
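The difference between deleting and sanitising can be shown with a toy model in Python. `ToyDisk` is hypothetical and greatly simplified. It uses fixed 4-byte blocks, a dictionary stands in for inodes or the MFT, and new files simply take the first free blocks. It still behaves as described above: `delete` removes only the metadata entry, so the raw blocks still hold the data. `sanitise` overwrites those blocks with zeros in a single pass and then removes the entry.

```python
class ToyDisk:
    """Toy model of a disk: raw blocks plus a file table holding the metadata."""

    BLOCK_SIZE = 4

    def __init__(self, num_blocks=8):
        self.blocks = [bytes(self.BLOCK_SIZE)] * num_blocks  # raw storage, all zeros
        self.file_table = {}  # file name -> list of block numbers (the metadata)

    def _used_blocks(self):
        return {i for locations in self.file_table.values() for i in locations}

    def write(self, name, data):
        used = self._used_blocks()
        free = [i for i in range(len(self.blocks)) if i not in used]
        chunks = [data[i:i + self.BLOCK_SIZE] for i in range(0, len(data), self.BLOCK_SIZE)]
        if len(chunks) > len(free):
            raise OSError("disk full")
        locations = free[:len(chunks)]
        for loc, chunk in zip(locations, chunks):
            self.blocks[loc] = chunk.ljust(self.BLOCK_SIZE, b"\x00")
        self.file_table[name] = locations

    def read(self, name):
        # Use the metadata to reassemble the file (toy: strips zero padding)
        return b"".join(self.blocks[i] for i in self.file_table[name]).rstrip(b"\x00")

    def delete(self, name):
        # Ordinary delete: only the metadata entry goes; the blocks keep their data
        del self.file_table[name]

    def sanitise(self, name):
        # Overwrite every block the file used (single pass of zeros), then drop metadata
        for i in self.file_table.pop(name):
            self.blocks[i] = bytes(self.BLOCK_SIZE)

    def raw_dump(self):
        """What someone reading the raw disk would see."""
        return b"".join(self.blocks)


disk = ToyDisk()
disk.write("secret.txt", b"PIN=1234")
print(disk.read("secret.txt"))  # b'PIN=1234'
disk.delete("secret.txt")
print("secret.txt" in disk.file_table, b"PIN=1234" in disk.raw_dump())  # False True

wiped = ToyDisk()
wiped.write("secret.txt", b"PIN=1234")
wiped.sanitise("secret.txt")
print(b"PIN=1234" in wiped.raw_dump())  # False
```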

EXAM TIP: Multiple overwrites are not necessary with modern hard drives; a single overwrite pass is sufficient. Some older documentation still says you need to overwrite multiple times.

Disk Sanitisation Tools

Apple Mac: macOS includes the built-in utility “Disk Utility”. Windows: there is no dedicated built-in disk-wiping application, but there are plenty of third-party programs. These include:

- Disk Wipe http://www.diskwipe.org/

- DBAN (Darik’s Boot and Nuke)

2.5.7 Steganography

Steganography is the process of hiding information within another file so that it is not visible to the naked eye. It is a valuable tool for keeping communications secret: the digital equivalent of invisible ink.

Steganography Techniques

One of the most common techniques is hiding text within an image file. High-resolution image files contain millions of pixels; a 30-megapixel image has 30 million of them, and these pixels can serve as a hiding space for information. If you very slightly change the shade of a few thousand of those pixels, you can hide text in the image. The change is not visible to the human eye, but the text can be retrieved with special software.
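A common way to make this kind of slight change is least-significant-bit (LSB) embedding. The lowest bit of each 8-bit colour value is replaced with one bit of the message, so no value changes by more than 1 out of 255. The Python sketch below is a minimal illustration, not necessarily how Quick Stego works. It operates on a `bytearray` standing in for already-decoded pixel channel values and skips image file handling entirely. It prefixes the message with a hypothetical 4-byte length header so the message can be extracted again. In practice, the carrier must be saved in a lossless format such as PNG, because lossy compression like JPEG would destroy the hidden bits.

```python
import random


def embed(pixels, message):
    """Hide a UTF-8 message in the least significant bits of the pixel values."""
    data = message.encode("utf-8")
    payload = len(data).to_bytes(4, "big") + data  # 4-byte length header + message
    bits = [(byte >> (7 - i)) & 1 for byte in payload for i in range(8)]
    if len(bits) > len(pixels):
        raise ValueError("carrier too small for this message")
    stego = bytearray(pixels)
    for index, bit in enumerate(bits):
        stego[index] = (stego[index] & 0xFE) | bit  # changes the value by at most 1
    return stego


def extract(pixels):
    """Recover a message hidden by embed()."""
    def read_bytes(start_bit, count):
        out = bytearray()
        for n in range(count):
            value = 0
            for i in range(8):
                value = (value << 1) | (pixels[start_bit + n * 8 + i] & 1)
            out.append(value)
        return bytes(out)

    length = int.from_bytes(read_bytes(0, 4), "big")
    return read_bytes(32, length).decode("utf-8")


random.seed(1)
# Stand-in for 10,000 RGB pixels (30,000 channel values) of a decoded image
image = bytearray(random.randrange(256) for _ in range(30_000))
stego = embed(image, "Meet at dawn")
print(extract(stego))  # Meet at dawn
print(max(abs(a - b) for a, b in zip(image, stego)) <= 1)  # True
```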

“Quick Stego” is a program that can embed text in an image so that it is hidden from the naked eye; the same software can also retrieve the text.